|

Hugh Zhang I'm currently a member of technical staff at a stealth AI startup. Previously, I worked as an AI researcher at Scale AI, and before that I was a PhD candidate at Harvard advised by David Parkes and supported by the NSF Graduate Research Fellowships Program and a Kempner Institute Graduate Fellowship. Even before that, I was a software engineer at Asana (gap year before college). In my spare time, I've been a lifelong Go player (in fact, seeing AlphaGo beat Lee Sedol was the origin of my interest in AI). I also co-founded the Gradient, a digital magazine focusing on AI. |

|

|

Research

My recent work focuses on evals, test-time compute, and post-training for LLMs. Previously, I worked on multi-agent reinforcement learning and game theory. * denotes equal or alphabetical ordering. |

|

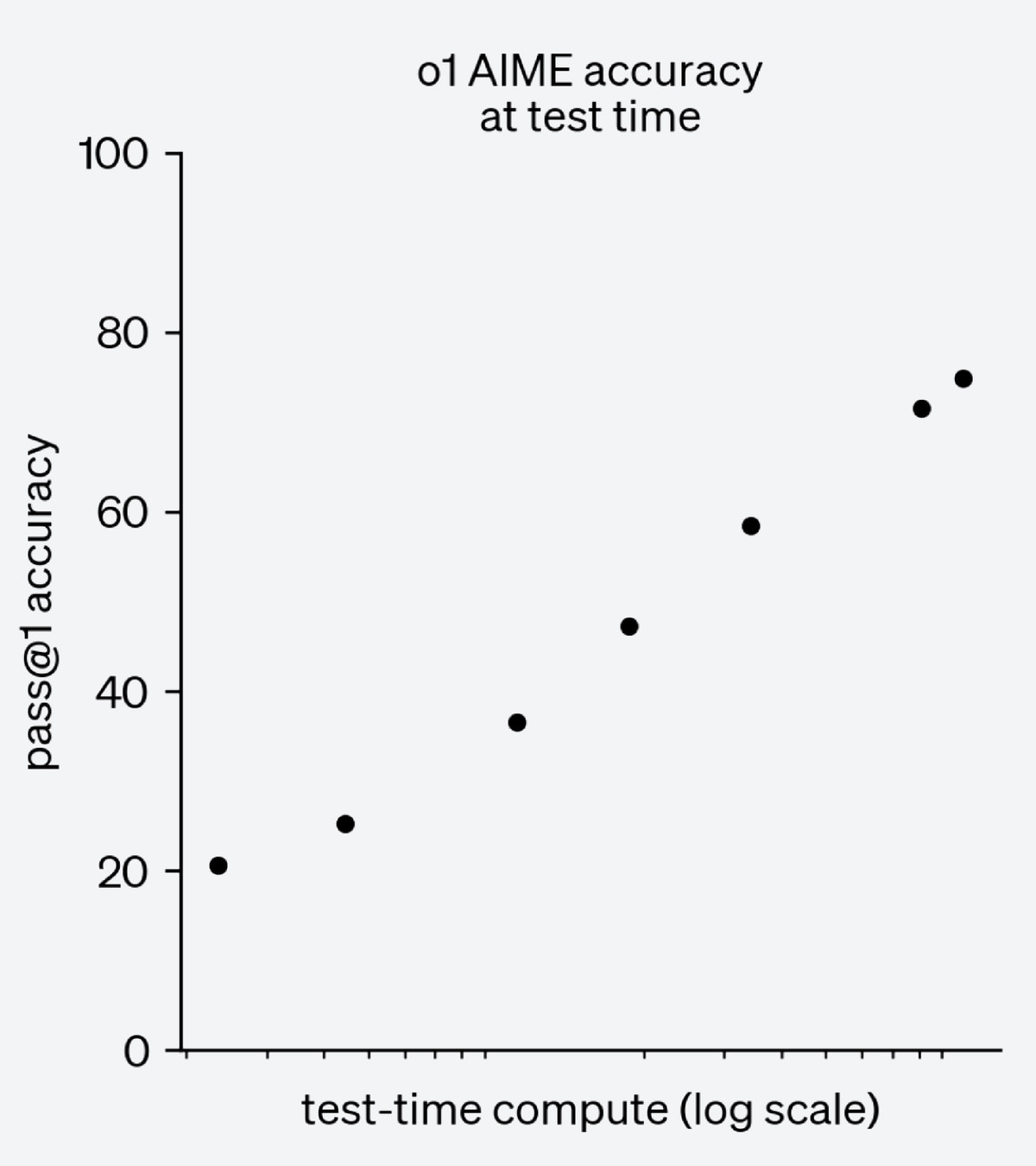

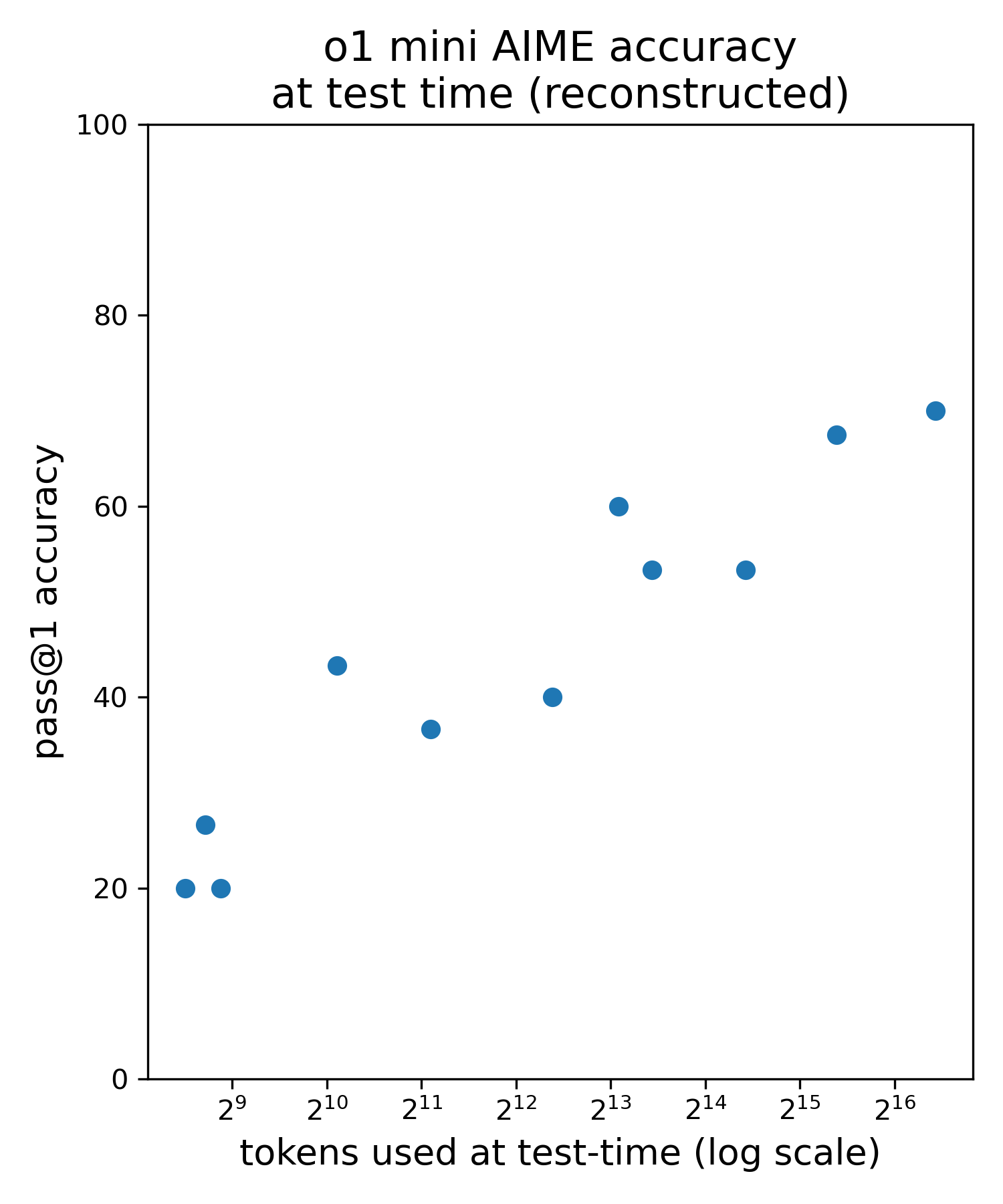

Reconstructing O1 Test-Time Compute Scaling Laws

Hugh Zhang, Celia Chen Reconstructed o1 test-time scaling laws using public API access to o1-mini. |

|

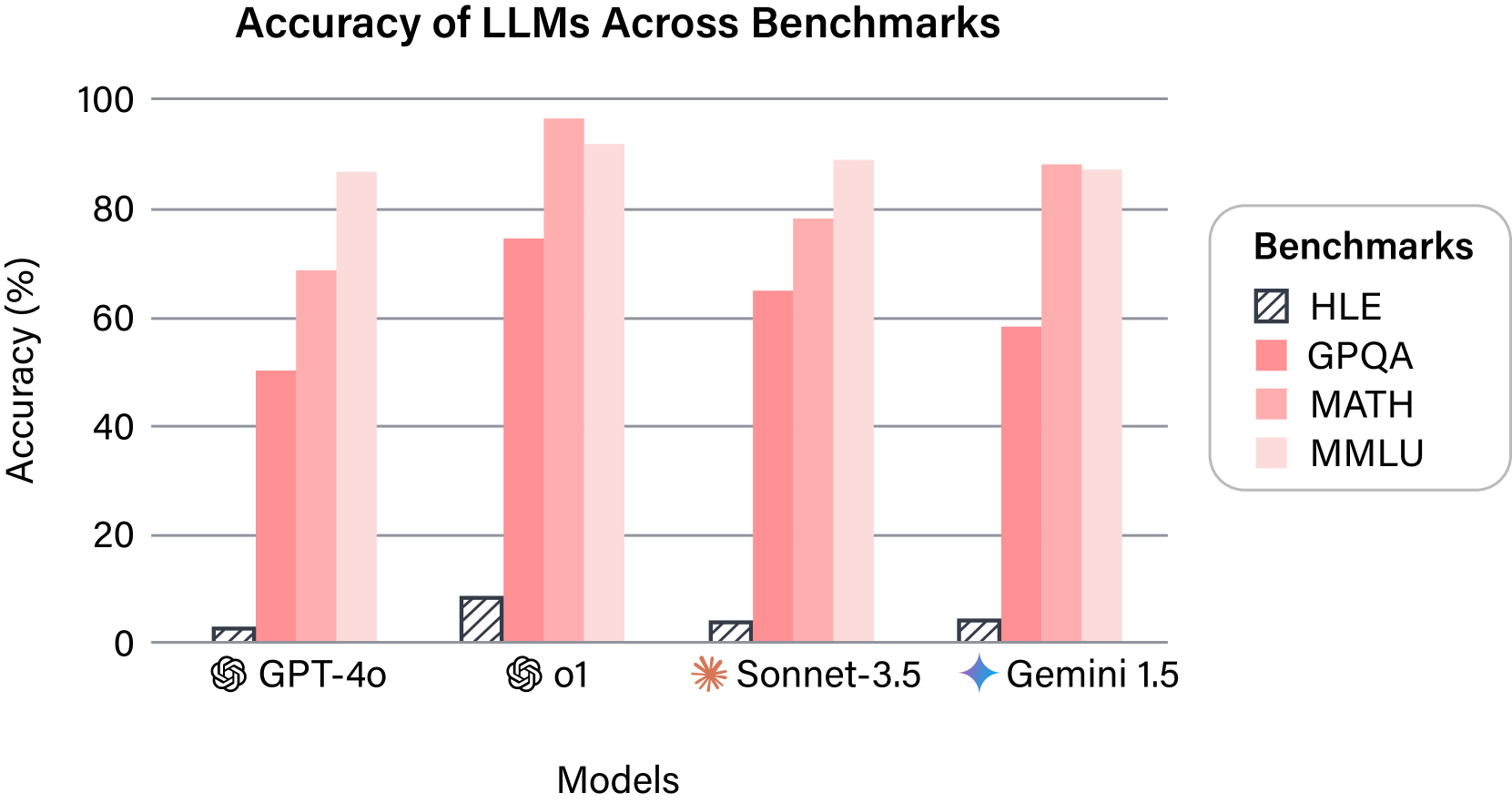

Humanity's Last Exam

Long Phan*, Alice Gatti*, Ziwen Han*, Nathaniel Li*, Josephina Hu, Hugh Zhang, Chen Bo Calvin Zhang, Mohamed Shaaban, John Ling, Sean Shi, and 1109 others Nature, 2026 website A frontier AI benchmark. |

|

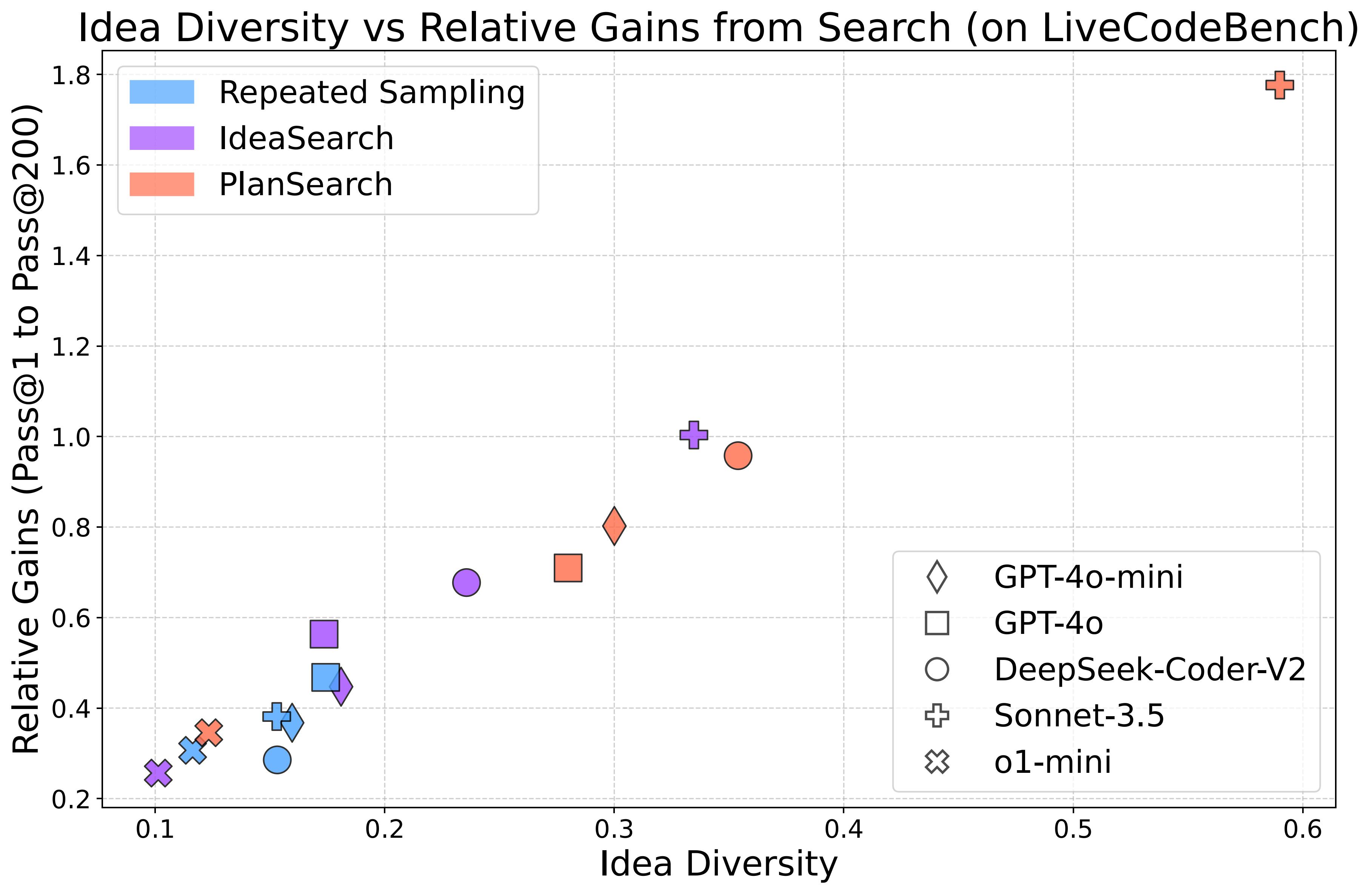

Planning In Natural Language Improves LLM Search For Code Generation

Evan Wang, Federico Cassano, Catherine Wu, Yunfeng Bai, Will Song, Vaskar Nath, Ziwen Han, Sean Hendryx, Summer Yue, Hugh Zhang twitter / twitter2 Searching over a diverse set of ideas/plans in natural language significantly helps code generation and is far more effective than repeated sampling. |

|

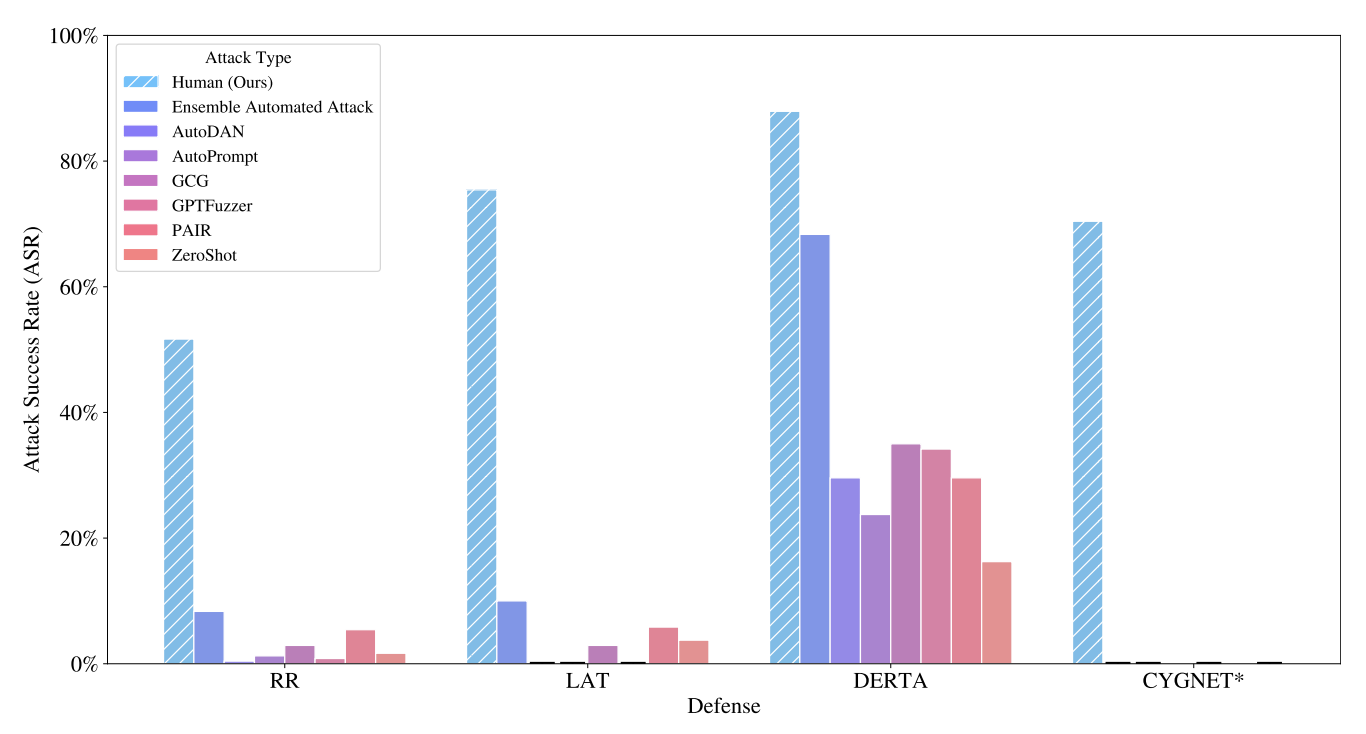

LLM Defenses Are Not Robust to Multi-Turn Human Jailbreaks Yet

Nathaniel Li, Ziwen Han, Ian Steneker, Willow Primack, Riley Goodside, Hugh Zhang, Zifan Wang, Cristina Menghini, Summer Yue Red Teaming GenAI Workshop @ NeurIPS, 2024 We demonstrate that multi-turn human jailbreaks can achieve >70% success rates against LLM defenses that report single-digit success rates for automated single-turn attacks. |

|

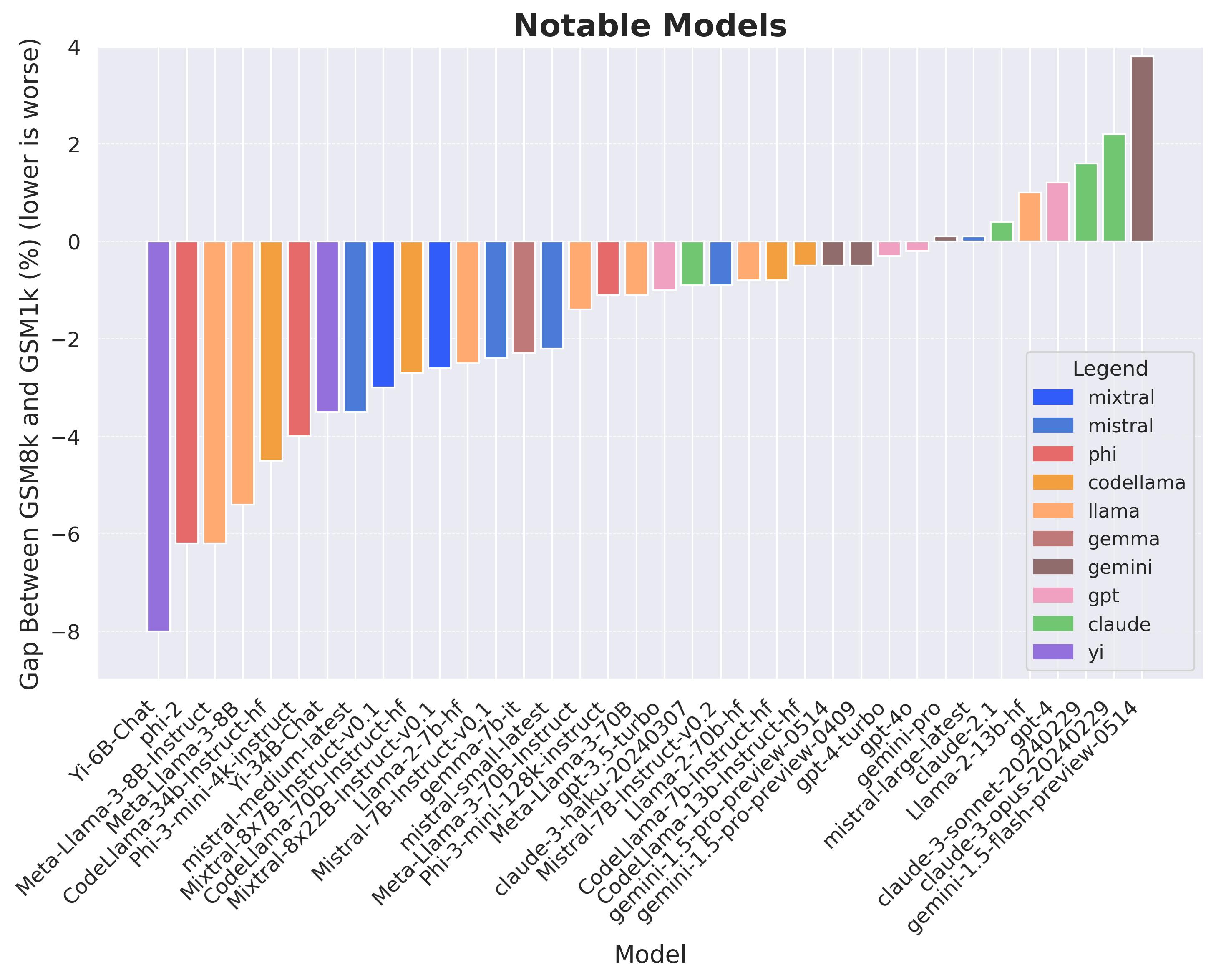

A Careful Examination of Large Language Model Performance on Grade School Arithmetic

Hugh Zhang, Jeff Da, Dean Lee, Vaughn Robinson, Catherine Wu, Will Song, Tiffany Zhao, Pranav Raja, Dylan Slack, Qin Lyu, Sean Hendryx, Russell Kaplan, Michele Lunati, Summer Yue NeurIPS Spotlight (Datasets and Benchmarks Track), 2023 talk / slides / twitter / twitter2 / twitter3 / twitter4 We clone GSM8k to measure dataset contamination. Some models show signs of overfitting, but frontier models show strong generalization. |

|

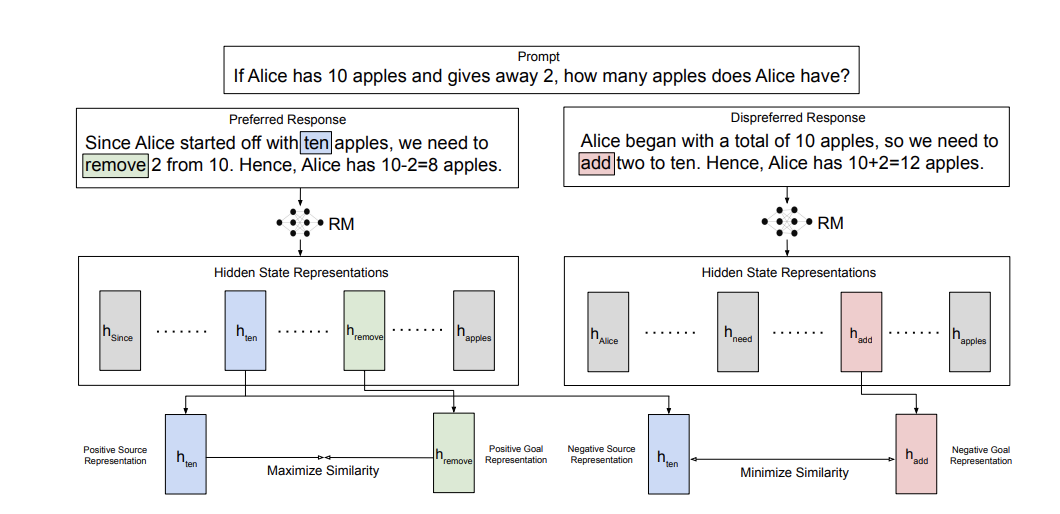

Learning Goal-Conditioned Representations for Language Reward Models

Vaskar Nath, Dylan Slack, Jeff Da, Yuntao Ma, Hugh Zhang, Spencer Whitehead, Sean Hendryx NeurIPS, 2024 Representation learning may be useful for post-training LLMs. |

|

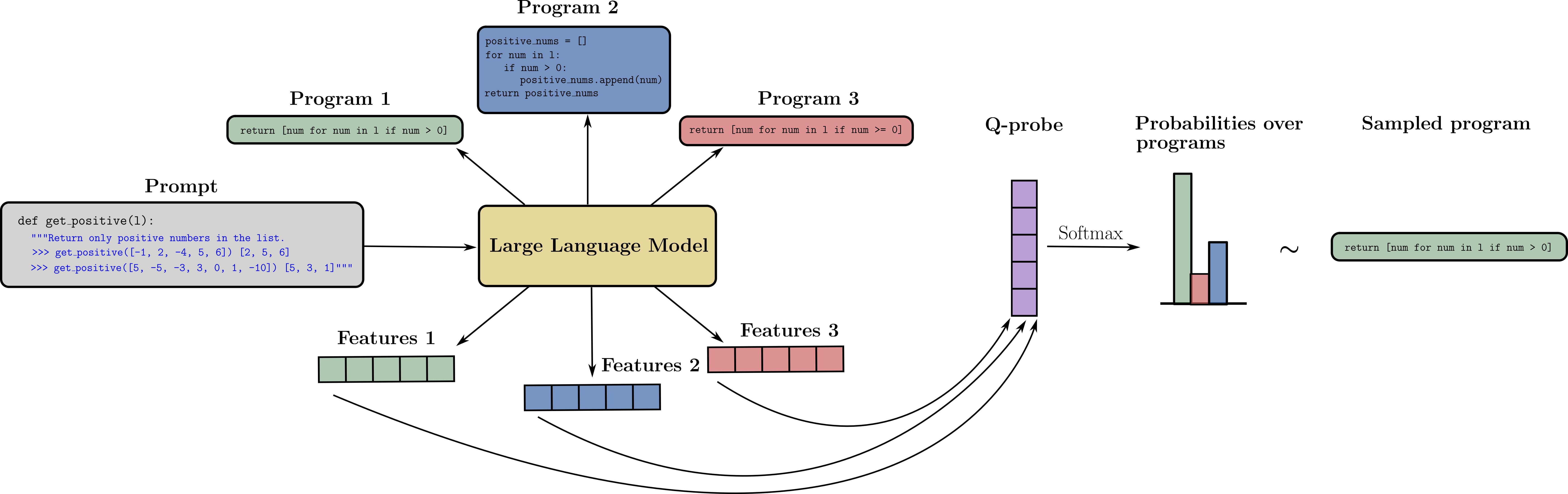

Q-Probe: A Lightweight Approach to Reward Maximization for Language Models

Kenneth Li, Samy Jelassi, Hugh Zhang, Sham Kakade, Martin Wattenberg, David Brandfonbrener A lightweight alternative to fine-tuning that performs better than LORA for very small datasets and requires minimal model access. |

|

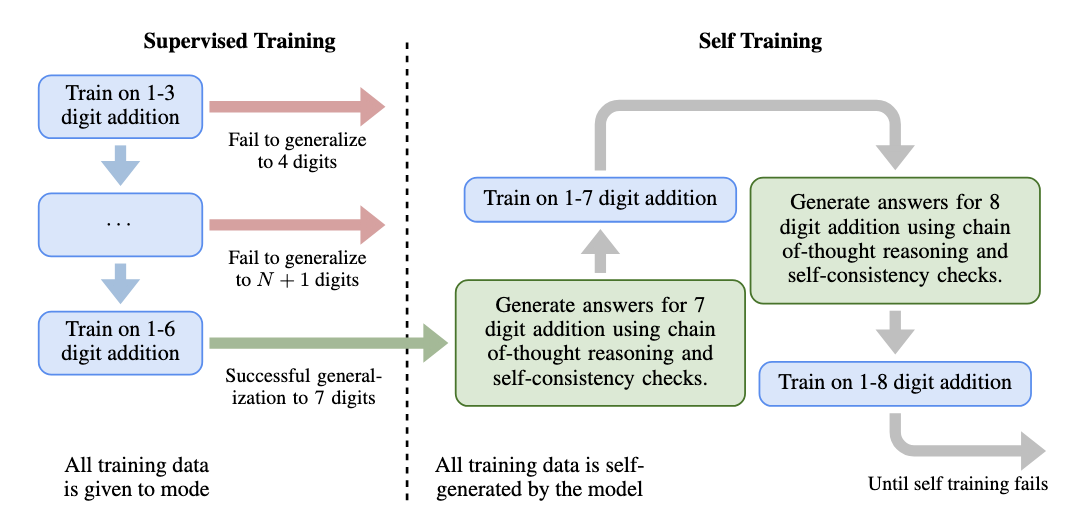

Chain-of-Thought Reasoning is a Policy Improvement Operator

Hugh Zhang, David C. Parkes Workshop on Instruction Tuning and Instruction Following at NeurIPS 2023 twitter / slides / poster Training on chain-of-thoughts that lead to a correct answer can help a LLM self-improve and generalize far beyond their original capabilities in the toy environment of addition. |

|

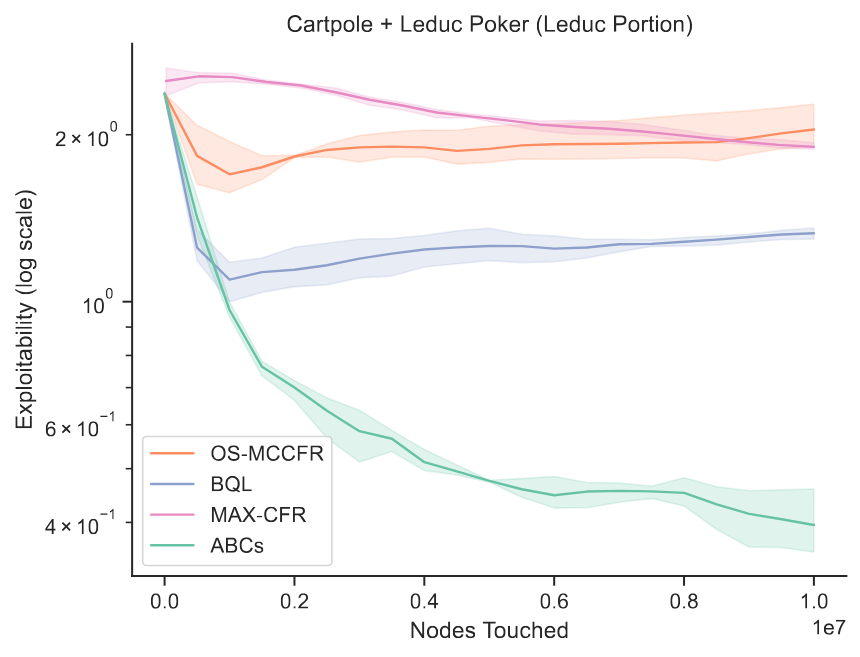

Easy as ABCs: Unifying Boltzmann Q-Learning and Counterfactual Regret Minimization

Luca D'Amico-Wong*, Hugh Zhang*, Marc Lanctot, David C. Parkes code Unified algorithm for both reinforcement learning and game theory. Can solve MDPs as fast as RL methods and imperfect-information games as fast as CFR using the single set of hyperparameters. |

|

Human-Level Play In The Game Of Diplomacy By Combining Language Models With Strategic Reasoning

Anton Bakhtin*, Noam Brown*, Emily Dinan*, Gabriele Farina*, Colin Flaherty*, Daniel Fried*, Andrew Goff*, Jonathan Gray*, Hengyuan Hu*, Athul Paul Jacob*, Mojtaba Komeili*, Karthik Konath*, Adam Lerer*, Mike Lewis*, Alexander H. Miller*, Sasha Mitts*, Adithya Renduchintala*, Stephen Roller*, Dirk Rowe*, Weiyan Shi*, Joe Spisak*, Alexander Wei*, David Wu*, Hugh Zhang*, Markus Zijlstra* Science, 2022 paper / blog / nyt / economist / gizmodo / forbes / new scientist / ars technica / mit tech review / kotaku / engadget / register / hacker news / reddit Human level performance in the game of Diplomacy, where agents negotiate with other humans in natural language. |

|

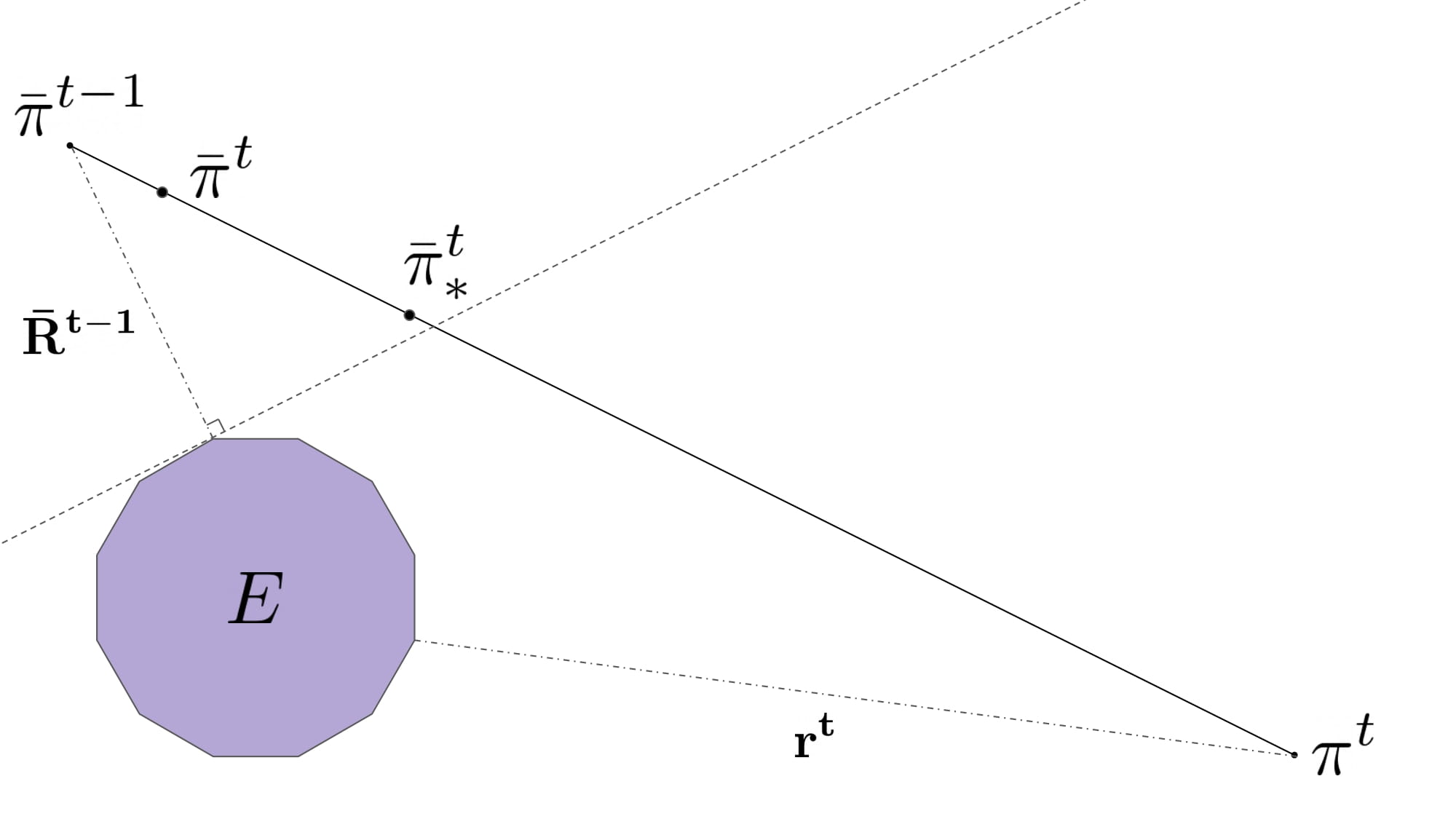

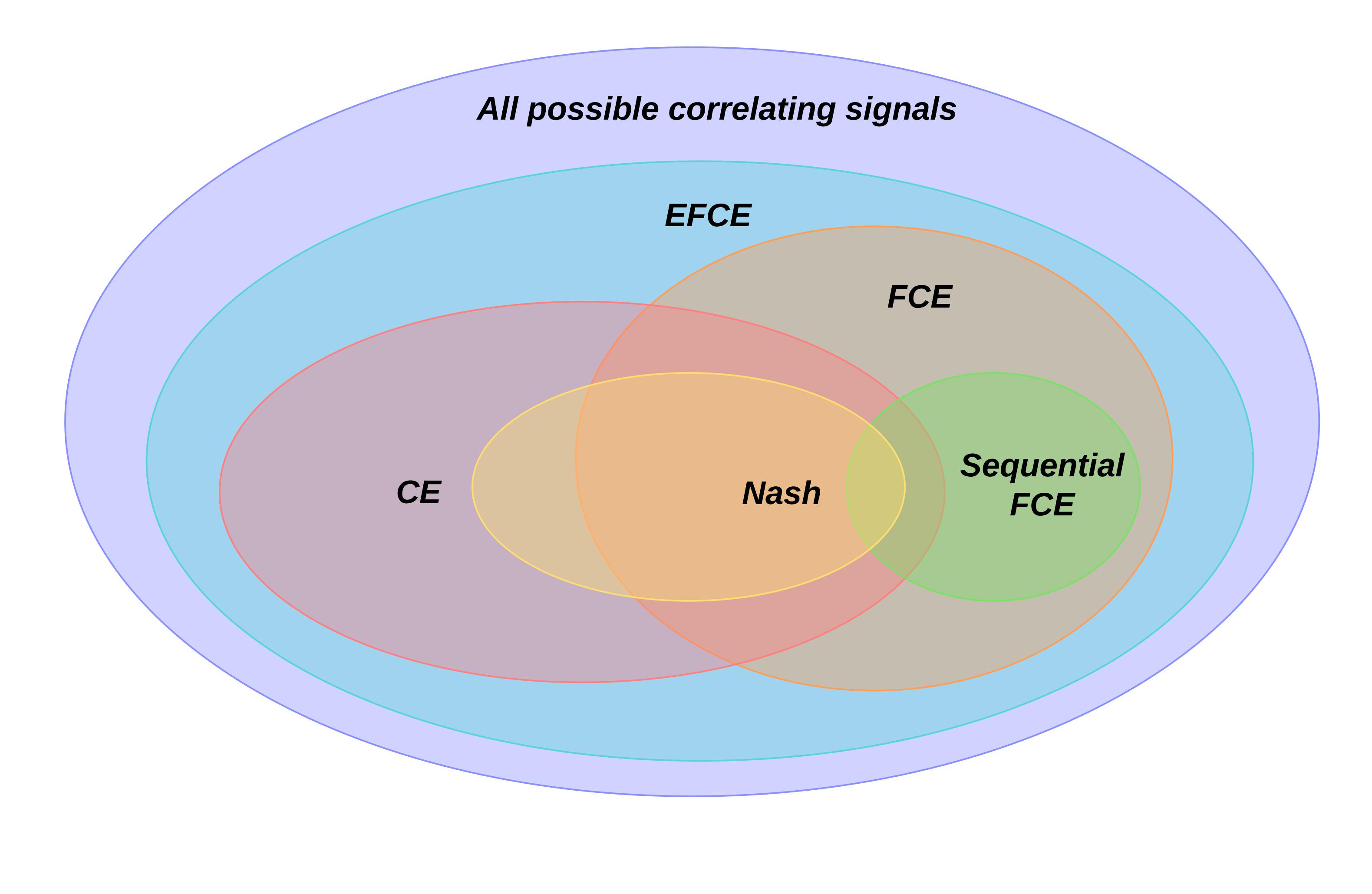

Equilibrium Finding in Normal-Form Games Via Greedy Regret Minimization

Hugh Zhang, Adam Lerer, Noam Brown Association for the Advancement of Artificial Intelligence (AAAI), 2022 A novel no-regret learning procedure that converges to correlated and coarse-correlated equilibria several orders of magnitude faster than previous methods in randomly generated normal-form games. |

|

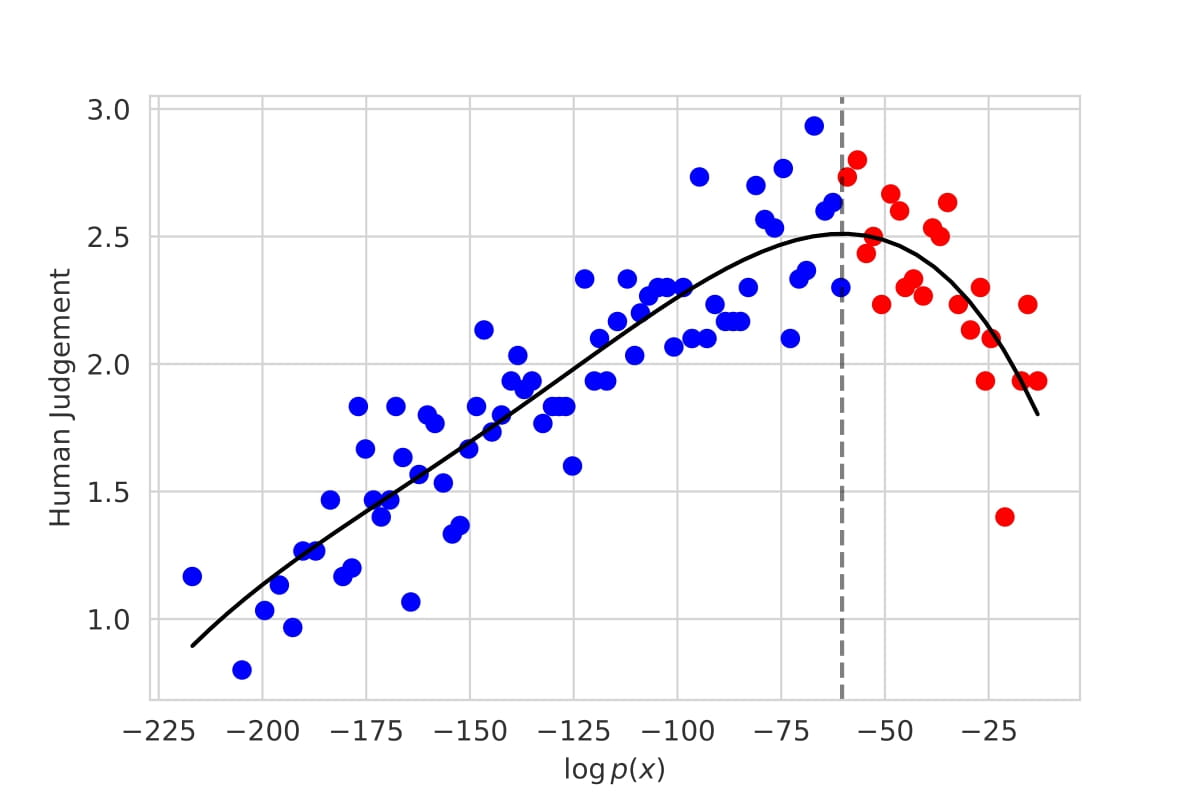

Trading Off Diversity and Quality in Natural Language Generation

Hugh Zhang*, Daniel Duckworth*, Daphne Ippolito, Arvind Neelakantan Workshop on Human Evaluation of Natural Language Processing Systems at the Conference of the European Chapter of the Association for Computational Linguistics (HumEval Workshop @EACL), 2021 The first large-scale evaluation of decoding methods for large language models along the entire quality-diversity spectrum. |

|

A Simple Adaptive Procedure Converging to Forgiving Correlated Equilibria

Hugh Zhang (advised by Gabriel Carroll) Stanford Senior Honors Thesis in Economics, 2020 (John G. Sobieski Award for Creative Thinking) Alongside Celli et al. (2020) (concurrent work), this paper gives the first internal regret minimization dynamics for extensive-form games. |

|

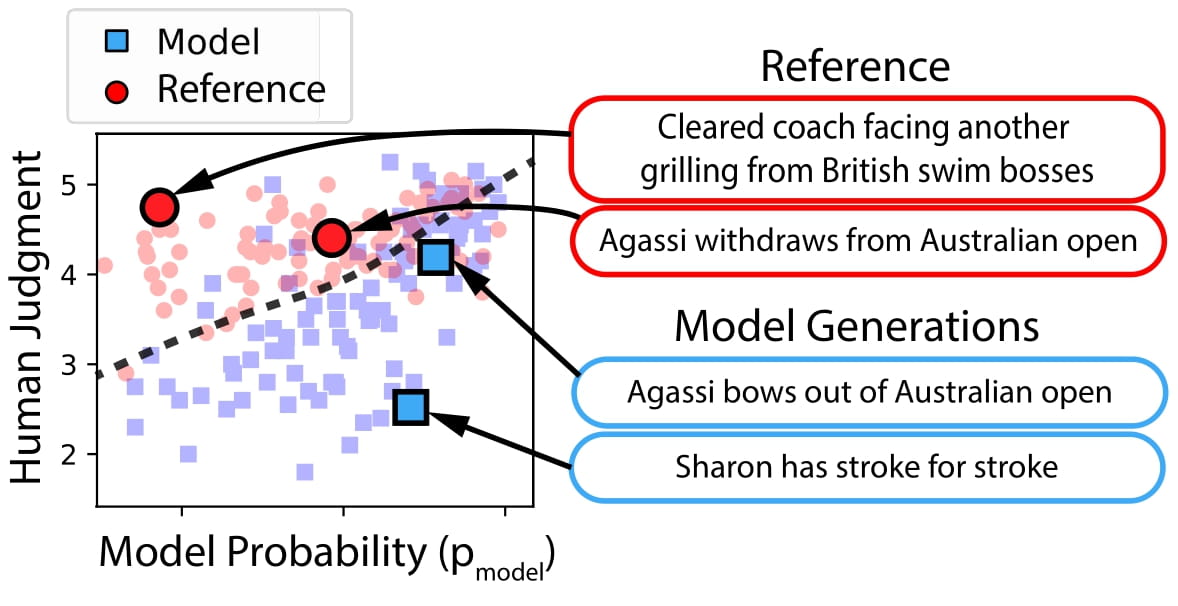

Unifying Human and Statistical Evaluation for Natural Language Generation

Tatsunori Hashimoto* , Hugh Zhang*, Percy Liang North American Chapter of the Association for Computational Linguistics (NAACL), 2019 (Oral Presentation) Existing language models can generate either high quality or diverse utterances, but not both simultaneously. How can we measure that in a single metric? |

|

Thanks to Jon Barron for this website's template. |